About the Provider

Qwen is an AI model family developed by Alibaba Group, a major Chinese technology and cloud computing company. Through its Qwen initiative, Alibaba builds and open-sources advanced language, vision, and coding models under permissive licenses to support innovation, developer tooling, and scalable AI integration across applications.

Model Quickstart

This section helps you quickly get started with the Qwen/Qwen3-VL-8B-Instruct model on the Qubrid AI inferencing platform.

To use this model, you need:

- A valid Qubrid API key

- Access to the Qubrid inference API

- Basic knowledge of making API requests in your preferred language

With these in place, you can send requests to the Qwen/Qwen3-VL-8B-Instruct model and receive responses based on your input prompts.

Below are example placeholders showing how the model can be accessed using different programming environments. You can choose the one that best fits your workflow.
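As one illustration of the prerequisites above, here is a minimal Python sketch that builds a text-plus-image request and sends it with the standard library. Several details are assumptions rather than confirmed Qubrid behavior: the request body follows the widely used OpenAI-style chat-completions schema, authentication uses a Bearer token header, and the environment variable names `QUBRID_API_URL` and `QUBRID_API_KEY` are hypothetical. Check the Qubrid API reference for the actual endpoint URL and field names before relying on it.

```python
import base64
import json
import os
import urllib.request

MODEL_ID = "Qwen/Qwen3-VL-8B-Instruct"


def image_to_data_url(image_bytes: bytes, mime_type: str = "image/png") -> str:
    """Encode raw image bytes as a base64 data URL for inline upload."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return f"data:{mime_type};base64,{encoded}"


def build_payload(prompt: str, image_url: str, max_tokens: int = 2048) -> dict:
    """Build a chat request body. ASSUMPTION: OpenAI-style message schema."""
    return {
        "model": MODEL_ID,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": prompt},
                    {"type": "image_url", "image_url": {"url": image_url}},
                ],
            }
        ],
        "max_tokens": max_tokens,
        "stream": False,
    }


def send_request(payload: dict, api_url: str, api_key: str) -> dict:
    """POST the payload as JSON and return the decoded JSON response."""
    request = urllib.request.Request(
        api_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            # ASSUMPTION: Bearer-token authentication.
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Hypothetical variable names; set them to the values from your account.
    api_url = os.environ.get("QUBRID_API_URL")
    api_key = os.environ.get("QUBRID_API_KEY")
    payload = build_payload("Describe this image.", "https://example.com/cat.png")
    if api_url and api_key:
        print(json.dumps(send_request(payload, api_url, api_key), indent=2))
    else:
        # Without credentials, just show the request body that would be sent.
        print(json.dumps(payload, indent=2))
```

For a local file, read its bytes and pass `image_to_data_url(...)` as the `image_url` argument instead of a public URL, assuming the endpoint accepts data URLs.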

Model Overview

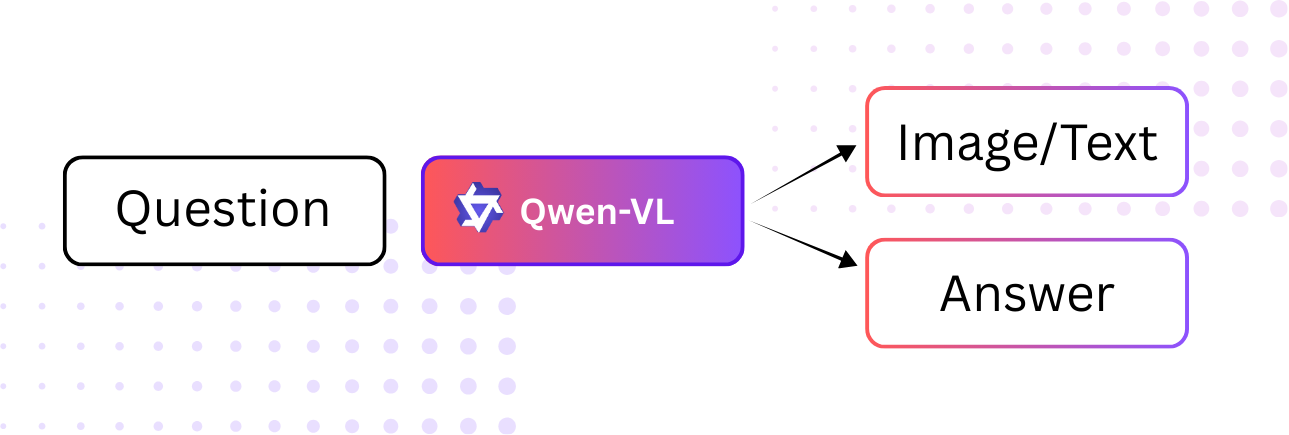

Qwen3 VL 8B Instruct is a vision-language instruction-tuned model designed to understand and reason over both text and images. It supports OCR, streaming responses, and rich multimodal conversations, making it suitable for vision-language inference workflows that require text–image understanding rather than content generation. The model focuses on strong visual perception, spatial reasoning, long-context understanding, and multimodal reasoning while remaining accessible for deployment across different environments.

Model at a Glance

| Feature | Details |
|---|---|
| Model ID | Qwen/Qwen3-VL-8B-Instruct |
| Provider | Alibaba Cloud (QwenLM) |
| Model Type | Vision-Language Instruction-Tuned Model |
| Architecture | Transformer decoder-only (Qwen3-VL with ViT visual encoder) |
| Model Size | 9B |
| Parameters | 6 |
| Context Length | 32K tokens |
| Training Data | Multilingual multimodal dataset (text + images) |

When to use?

Use Qwen3 VL 8B Instruct if your inference workload requires:

- Understanding and reasoning over images and text together

- OCR across multiple languages with structured document understanding

- Visual question answering and image captioning

- Multimodal chat with streaming support

- Spatial reasoning and visual perception without image generation needs

Inference Parameters

| Parameter Name | Type | Default | Description |
|---|---|---|---|
| Streaming | boolean | true | Enable streaming responses for real-time output. |
| Temperature | number | 0.7 | Controls randomness in the output. |
| Max Tokens | number | 2048 | Maximum number of tokens to generate. |
| Top P | number | 0.9 | Controls nucleus sampling. |
| Top K | number | 50 | Limits sampling to the top-k tokens. |
| Presence Penalty | number | 0 | Discourages repeated tokens in the output. |
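To make the sampling parameters more concrete, the toy sketch below shows how Temperature, Top K, and Top P can shape a next-token distribution. It is an illustration of the general technique on a handful of made-up logits, not the platform's or the model's actual implementation. Inference engines differ in the order and details of these steps, and the function name `filter_distribution` is hypothetical.

```python
import math


def filter_distribution(logits, temperature=0.7, top_k=50, top_p=0.9):
    """Return {token_index: probability} after temperature, top-k and top-p."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")

    # Temperature scaling: values below 1 sharpen the distribution,
    # values above 1 flatten it.
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    weights = [math.exp(value - peak) for value in scaled]
    total = sum(weights)
    probs = [weight / total for weight in weights]

    # Top-k: keep only the k most likely tokens.
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    ranked = ranked[:top_k]

    # Top-p (nucleus): keep the smallest prefix of the ranked tokens whose
    # cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for index in ranked:
        kept.append(index)
        cumulative += probs[index]
        if cumulative >= top_p:
            break

    # Renormalise the surviving tokens so their probabilities sum to 1.
    kept_total = sum(probs[i] for i in kept)
    return {i: probs[i] / kept_total for i in kept}


if __name__ == "__main__":
    toy_logits = [2.0, 1.0, 0.0, -1.0]  # made-up scores for four tokens
    print(filter_distribution(toy_logits))  # table defaults
    print(filter_distribution(toy_logits, temperature=1.5, top_p=1.0))
```

Lowering Temperature or Top P narrows the set of candidate tokens and makes output more deterministic, while raising them admits less likely tokens and increases variety.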

Key Features

- Strong Vision-Language Capabilities: Handles text and image understanding in a unified manner

- Multilingual OCR: Supports OCR in up to 32 languages with improved robustness

- Long-Context & Video Understanding: Designed for extended context reasoning within the Qwen3-VL family

- Streaming Support: Enables fast, incremental response generation

- Advanced Spatial & Visual Reasoning: Understands object positions, layouts, and visual relationships
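The streaming feature listed above can be consumed incrementally on the client side. The sketch below assumes the stream arrives as server-sent events in the common OpenAI-style format (`data: {...}` lines carrying a `choices[].delta.content` field, ending with `data: [DONE]`). That wire format is an assumption and is not confirmed by this page, and `iter_stream_text` is a hypothetical helper name.

```python
import json


def iter_stream_text(lines):
    """Yield text fragments from an iterable of SSE lines (ASSUMED format)."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and SSE comments
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # ASSUMPTION: sentinel marking the end of the stream
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


if __name__ == "__main__":
    # Hand-written sample lines standing in for a real response stream.
    sample = [
        'data: {"choices": [{"delta": {"content": "A cat "}}]}',
        "",
        'data: {"choices": [{"delta": {"content": "on a sofa."}}]}',
        "data: [DONE]",
    ]
    for fragment in iter_stream_text(sample):
        print(fragment, end="", flush=True)
    print()
```

In a real client, `lines` would be the decoded lines of the HTTP response body read as they arrive, so each fragment can be displayed before the full answer is complete.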